Introduction

A good Agentforce demo is easy to love. It can answer questions, summarize cases, trigger actions, and create the sense that autonomous work is finally here.

What is harder, and far more important, is the last mile: the distance between a polished demo and a deployment your business will trust with revenue, service, compliance, and customer experience.

In most enterprises, that gap is not caused by model quality alone. It is caused by fragmented context, disconnected workflows, and weak governance across systems. Analyst forecasts reinforce both the opportunity and the need for operational rigor. For example, Gartner predicts that by 2029, agentic AI will autonomously resolve 80% of common customer service issues without human intervention. Gartner

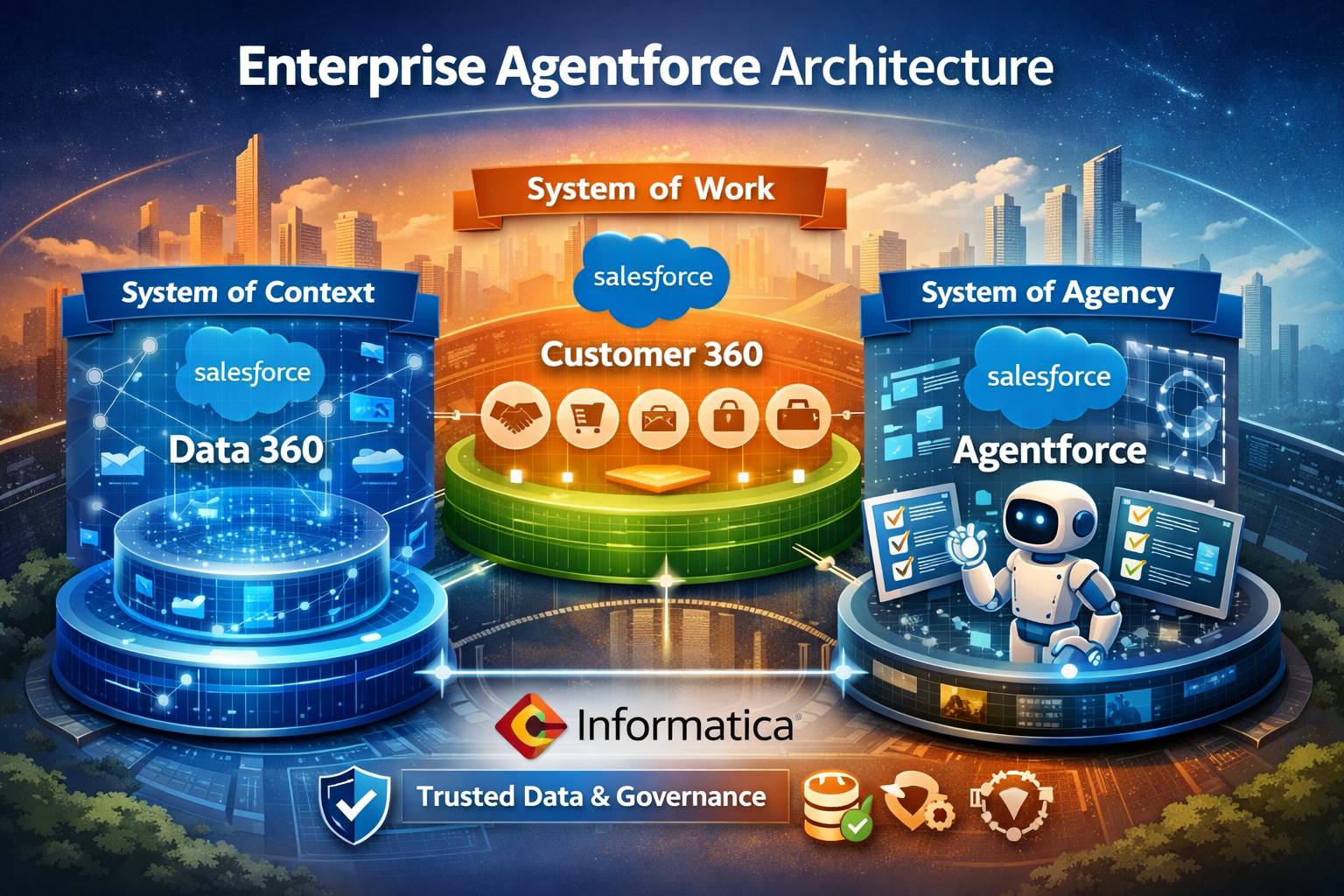

For IT leaders, the winning pattern is not “add an AI agent and hope.” It is to build for three distinct but connected layers.

The three-system architecture behind enterprise Agentforce

1) System of context: Salesforce Data 360

Salesforce positions Data 360, formerly Data Cloud, as the real-time data engine that unifies fragmented data from Salesforce and external systems into trusted profiles. It is designed to activate data across apps and supports zero-copy access to warehouse and lake data so teams can use more context without unnecessary duplication. Salesforce

Why this matters for Agentforce: Agents are only as useful as the context they can access. A customer-facing agent that sees only CRM notes is a demo. A production-grade agent needs customer history, entitlement data, product telemetry, order status, billing signals, policy rules, and often information outside Salesforce. That is why the system of context comes first.

Last-mile failure example: A service agent confidently approves a replacement—but the entitlement record is stale, the customer is out of coverage, and the wrong action gets executed. Users don’t blame “AI.” They blame the system.
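The failure above can be guarded against with a freshness check before the agent acts. The following is a minimal sketch, not a Salesforce API: the `Entitlement` fields, the 24-hour `MAX_RECORD_AGE` threshold, and the `replacement_decision` function are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical threshold: records older than this are treated as stale.
MAX_RECORD_AGE = timedelta(hours=24)


@dataclass(frozen=True)
class Entitlement:
    customer_id: str
    coverage_end: datetime   # when coverage expires
    last_synced: datetime    # when this record was last refreshed from its source


def replacement_decision(ent: Entitlement, now: datetime) -> str:
    """Return 'approve', 'deny', or 'escalate' for a replacement request."""
    if now - ent.last_synced > MAX_RECORD_AGE:
        return "escalate"    # stale context: hand off instead of acting
    if ent.coverage_end < now:
        return "deny"        # fresh record, but the customer is out of coverage
    return "approve"


now = datetime(2025, 1, 15, 12, 0, tzinfo=timezone.utc)
stale = Entitlement(
    customer_id="C-1",
    coverage_end=datetime(2025, 6, 1, tzinfo=timezone.utc),
    last_synced=now - timedelta(days=3),
)
print(replacement_decision(stale, now))  # escalate
```

The design choice worth noting is the order of the checks: staleness is tested first, so an outdated record can never produce a confident approve or deny.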

2) System of work: Salesforce Customer 360

Customer 360 is the application layer: sales, service, marketing, commerce, and other workflows where work actually gets done. Salesforce describes it as a set of deeply unified apps built on the platform to provide a complete view of customers across touchpoints. Salesforce

Why this matters for Agentforce: Agents cannot create enterprise value unless they operate inside real processes. In practice, that means case resolution, opportunity progression, quote follow-up, onboarding, field service updates, renewal plays, and escalation routing. AI should not sit beside work. It should be embedded in work.

3) System of agency: Salesforce Agentforce

Salesforce positions Agentforce as an enterprise agentic AI platform that brings together humans, applications, AI agents, and data, and gives teams tools to build, test, deploy, manage, and orchestrate agents at scale. Its guide also highlights the core building blocks used by technical teams: topics, instructions, actions, and the Atlas Reasoning Engine. Salesforce

Why this matters for IT leaders: This is where governance becomes operational. You decide:

- What an agent can do (and cannot do)

- Which tools it may call, under what conditions

- Confidence thresholds and escalation rules

- Human handoff patterns for high-risk steps

- Observability: how you measure performance and control drift over time
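To make these decisions concrete, here is a minimal sketch of a policy gate in plain Python. It does not use any Agentforce API; `AgentPolicy`, `gate`, the tool names, and the 0.8 threshold are hypothetical and only illustrate how allowed tools, confidence thresholds, human handoff, and an audit trail could fit together.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    allowed_tools: frozenset     # tools the agent may call at all
    high_risk_tools: frozenset   # allowed, but only with a human in the loop
    min_confidence: float        # below this, escalate instead of acting


def gate(policy: AgentPolicy, tool: str, confidence: float, audit_log: list) -> str:
    """Decide whether a proposed tool call may proceed, and record the decision."""
    if tool not in policy.allowed_tools:
        decision = "block"
    elif tool in policy.high_risk_tools:
        decision = "handoff"
    elif confidence < policy.min_confidence:
        decision = "escalate"
    else:
        decision = "allow"
    # Every decision is logged so behavior can be measured over time.
    audit_log.append({"tool": tool, "confidence": confidence, "decision": decision})
    return decision


policy = AgentPolicy(
    allowed_tools=frozenset({"update_case", "issue_refund"}),
    high_risk_tools=frozenset({"issue_refund"}),
    min_confidence=0.8,
)
log = []
print(gate(policy, "update_case", 0.92, log))     # allow
print(gate(policy, "update_case", 0.55, log))     # escalate
print(gate(policy, "issue_refund", 0.99, log))    # handoff
print(gate(policy, "delete_account", 0.99, log))  # block
```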


Where Informatica strengthens the architecture

Salesforce’s Informatica pages frame “trusted context” as the combination of entity definitions, hierarchies, relationships, quality rules, lineage, provenance, and policy metadata across systems. Informatica adds data catalog, governance, data quality, and MDM capabilities that help create a trusted “golden record.” Salesforce also explicitly states Informatica’s technology enhances Data 360, Agentforce, and MuleSoft with the pipeline and cleansing needed so data is trusted, clear, and actionable. Salesforce

For IT leaders, that changes the design conversation. The goal is not merely to connect Agentforce to more data. The goal is to connect Agentforce to governed, relevant, explainable data.

In practice, Informatica strengthens “trusted context” through:

- Identity resolution + mastered records: Consistent customer/account views across systems

- Hierarchy + relationship accuracy: Parent/child accounts, householding, B2B hierarchies

- Data quality rules: Duplicates, missing fields, invalid values, standardization

- Catalog + discoverability: What data exists, where it lives, what it means

- Lineage + provenance: Audit-ready “where did this value come from?”

- Policy + access metadata: What can be used, by whom, for which purpose

That is the difference between an impressive answer and a dependable action.
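As an illustration of the data quality rules listed above (duplicates, missing fields, invalid values), here is a small sketch in plain Python. It is not how Informatica implements these checks; the field names, the `VALID_COUNTRIES` set, and the `quality_issues` function are assumptions made for the example.

```python
from collections import Counter

# Hypothetical rule configuration for an account record.
REQUIRED_FIELDS = ("account_id", "email", "country")
VALID_COUNTRIES = {"US", "CA", "GB"}


def normalize_email(value):
    """Standardize an email so that case and whitespace do not hide duplicates."""
    return (value or "").strip().lower()


def quality_issues(records):
    """Return (record_index, issue) pairs for missing, invalid, and duplicate values."""
    email_counts = Counter(
        normalize_email(r.get("email")) for r in records if r.get("email")
    )
    issues = []
    for index, record in enumerate(records):
        for field_name in REQUIRED_FIELDS:
            if not record.get(field_name):
                issues.append((index, f"missing:{field_name}"))
        country = record.get("country")
        if country and country not in VALID_COUNTRIES:
            issues.append((index, "invalid:country"))
        email = normalize_email(record.get("email"))
        if email and email_counts[email] > 1:
            issues.append((index, "duplicate:email"))
    return issues


records = [
    {"account_id": "A1", "email": "pat@example.com", "country": "US"},
    {"account_id": "A2", "email": "PAT@example.com ", "country": "US"},
    {"account_id": "A3", "email": "", "country": "XX"},
]
for issue in quality_issues(records):
    print(issue)
```

In this sample, the first two records are flagged as duplicates of each other after standardization, and the third is flagged for a missing email and an invalid country.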


The pattern we see repeatedly in enterprise AI programs

- Story: The pilot works. Stakeholders are excited.

- Problem: Rollout stalls because context is incomplete, permissions are inconsistent, or actions break across systems.

- Solution: Redesign the rollout around context + work + control, not around the model alone.

The sequence that scales:

- Data 360 unifies and activates context

- Informatica improves data quality, governance, metadata, and mastered records

- Customer 360 supplies the business workflows and user touchpoints

- Agentforce reasons, decides, and acts within clear policy boundaries

That sequence matters. McKinsey has reported that gen AI adoption rose sharply as organizations started to generate real value, which is a reminder that scale depends less on experimentation alone and more on operating discipline. McKinsey

IDC’s CX research also points to customer experience as a leading investment focus, which is why many first production use cases appear in service, support, and revenue operations. IDC

Mini runbook: How to move from demo to mission-critical deployment


Phase 1 (1–2 weeks): Define the bounded use case

Pick one workflow with measurable impact:

- Service case deflection

- Renewal risk intervention

- Sales follow-up automation

- Order status resolution

Definition of done: One bounded workflow selected, with an agreed, measurable impact target.

Phase 2 (1–2 weeks): Map the context model

Document the minimum context needed:

- Customer profile

- Account hierarchy

- Product or asset data

- Order and billing status

- Policy and eligibility rules

- Consent and access controls

Definition of done: An entity/field map with authoritative sources and “must-have vs nice-to-have” context.
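One way to capture such an entity/field map is as structured data that can be checked at runtime. The sketch below is illustrative only: the `ContextField` type, the field names, and the source system labels are hypothetical and would be replaced by your own inventory.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextField:
    entity: str
    field: str
    source: str       # authoritative system for this value (illustrative labels)
    must_have: bool   # True = required before the agent may act


CONTEXT_MAP = [
    ContextField("Customer", "profile_id", "Data 360", True),
    ContextField("Account", "parent_account_id", "MDM", True),
    ContextField("Entitlement", "coverage_end", "Service system", True),
    ContextField("Order", "status", "ERP", False),
    ContextField("Consent", "marketing_opt_in", "Consent platform", False),
]


def missing_must_haves(context_map, available):
    """List must-have fields absent from `available`, a set of (entity, field) pairs."""
    return [
        f"{c.entity}.{c.field}"
        for c in context_map
        if c.must_have and (c.entity, c.field) not in available
    ]


available = {("Customer", "profile_id"), ("Order", "status")}
print(missing_must_haves(CONTEXT_MAP, available))
# ['Account.parent_account_id', 'Entitlement.coverage_end']
```

A non-empty result is a signal to gather more context or hand off to a human rather than let the agent proceed.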

Phase 3 (2–3 weeks): Establish trust controls

Validate and operationalize:

- Data quality thresholds

- Golden record ownership

- Lineage and provenance

- Role-based access

- Escalation rules

- Auditability

Definition of done: Documented controls + logging plan + approval gates for agent actions.

Phase 4 (2–4 weeks): Connect the system of work

Embed agent actions into actual processes:

- Case updates

- Task creation

- Next-best actions

- Approvals

- Human handoff

Definition of done: An “action catalog” defining allowed actions, constraints, and required validations per action.
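An action catalog can start as a simple lookup table that is consulted before any action runs. The following sketch assumes hypothetical action names and payload fields; `ActionSpec`, `require`, and `check_action` are illustrative helpers, not part of any Salesforce product.

```python
from dataclasses import dataclass


def require(field_name):
    """Build a validation that fails when a payload field is missing or empty."""
    def check(payload):
        return None if payload.get(field_name) else f"missing {field_name}"
    return check


@dataclass(frozen=True)
class ActionSpec:
    requires_approval: bool   # constraint: a human must approve before execution
    validations: tuple = ()   # checks that must pass for the payload


# Hypothetical catalog: anything not listed here is not allowed.
ACTION_CATALOG = {
    "update_case": ActionSpec(False, (require("case_id"), require("status"))),
    "create_task": ActionSpec(False, (require("owner_id"), require("subject"))),
    "approve_replacement": ActionSpec(True, (require("case_id"), require("entitlement_id"))),
}


def check_action(name, payload):
    """Return (outcome, errors) where outcome is allowed, needs_approval, or rejected."""
    spec = ACTION_CATALOG.get(name)
    if spec is None:
        return "rejected", ["action not in catalog"]
    errors = []
    for validate in spec.validations:
        error = validate(payload)
        if error is not None:
            errors.append(error)
    if errors:
        return "rejected", errors
    return ("needs_approval" if spec.requires_approval else "allowed"), []


print(check_action("update_case", {"case_id": "500X", "status": "Closed"}))
print(check_action("approve_replacement", {"case_id": "500X", "entitlement_id": "E1"}))
print(check_action("close_account", {}))
```

Keeping the catalog as data rather than scattered conditionals makes it easier to review and audit when the set of allowed actions changes.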

Phase 5 (ongoing weekly): Instrument and iterate

Track outcomes weekly and refine:

- Instructions

- Actions

- Context windows

- Exception paths

- Human review points

Definition of done: A weekly ops cadence with dashboards, incident review, and controlled releases.

KPIs to track

Choose metrics that reflect business + operational value:

- Autonomous resolution / containment rate

- Average handling time (AHT) or time to resolution

- Trusted context coverage (how often required context is available and high-confidence)

- Human escalation rate (and reasons)

- CSAT/NPS for agent-assisted journeys
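Several of these metrics can be computed directly from interaction logs. Here is a minimal sketch, assuming each interaction is recorded with the hypothetical fields shown; the `Interaction` type and `weekly_kpis` function are illustrative, and real definitions of containment or coverage should follow your own reporting standards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    resolved_by_agent: bool   # closed without human involvement
    escalated: bool           # handed to a human at some point
    context_complete: bool    # all must-have context was available
    handle_seconds: float     # time from open to resolution


def weekly_kpis(interactions):
    """Compute simple rate and average metrics over a list of interactions."""
    total = len(interactions)
    if total == 0:
        return {}
    return {
        "containment_rate": sum(i.resolved_by_agent for i in interactions) / total,
        "escalation_rate": sum(i.escalated for i in interactions) / total,
        "context_coverage": sum(i.context_complete for i in interactions) / total,
        "avg_handle_seconds": sum(i.handle_seconds for i in interactions) / total,
    }


sample = [
    Interaction(True, False, True, 60.0),
    Interaction(True, False, True, 120.0),
    Interaction(False, True, True, 300.0),
    Interaction(False, True, False, 240.0),
]
print(weekly_kpis(sample))
# {'containment_rate': 0.5, 'escalation_rate': 0.5, 'context_coverage': 0.75, 'avg_handle_seconds': 180.0}
```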


Common pitfalls

- Treating Agentforce as the whole strategy: Agentforce is powerful, but it is the agency layer, not the entire operating model. Without context and workflow integration, it will struggle to scale.

- Feeding agents low-trust data: Bad hierarchy data, duplicate records, missing entitlements, and unclear lineage create failure modes that users experience immediately. Informatica’s emphasis on governance, quality, and MDM is especially relevant here.

- Over-automating before governance is ready: Not every action should be autonomous on day one. Start with bounded authority and clear human fallback.

- Measuring novelty instead of operational value: Demo applause is not a KPI. Resolution, speed, quality, and trust are.

ANALYST INSIGHTS

Gartner

Gartner says that by 2029, agentic AI will autonomously resolve 80% of common customer service issues without human intervention, reducing operational costs by 30%. Gartner

What this means: The market signal is clear. Enterprise agents are moving from assistive to operational. IT leaders should design for governance, observability, and process integration now, not after rollout.

McKinsey

McKinsey reports that 65% of organizations are regularly using gen AI, nearly double the level seen ten months earlier. McKinsey

What this means: adoption is no longer the hard part. Differentiation now comes from implementation quality—especially data readiness, workflow fit, and value measurement.

IDC

IDC notes that delivering great customer experience ranked as the top focus area for deriving customer value in its FERS survey. IDC

What this means: service and customer-facing workflows remain the most practical starting point for Agentforce, because the business case is already strong and measurable.

Agentforce becomes mission-critical only when it is wired to trusted context, embedded in real workflows, and governed like any other production system.

If your team is working through the last-mile challenge—turning Agentforce from a promising pilot into a reliable enterprise capability—Pacific Data Integrators can help you blueprint the rollout.

Book a demo with Pacific Data Integrators to explore how to connect trusted context, Salesforce workflows, and production-grade agent operations.

Blog Post by PDI Marketing Team

Pacific Data Integrators Offers Unique Data Solutions Leveraging AI/ML, Large Language Models (OpenAI: GPT-4, Meta: Llama 2, Databricks: Dolly), Cloud, Data Management and Analytics Technologies, Helping Leading Organizations Solve Their Critical Business Challenges, Drive Data-Driven Insights, Improve Decision-Making, and Achieve Business Objectives.